Video & Podcast

15th January 2026

This article continues our exploration of modern distributed systems for capital markets by moving from design principles to concrete implementation choices around sequencer architectures. The on-demand recording includes detailed diagrams, timelines, and walkthroughs for deeper study.

If you read the first post on ‘Building consistent, low‑latency distributed systems for capital markets’, this is the practical follow‑on; if you did not, this article stands alone with a deeper focus on how to structure sequencer architectures as deterministic state machines, recover quickly from faults, and keep latency in check at scale.

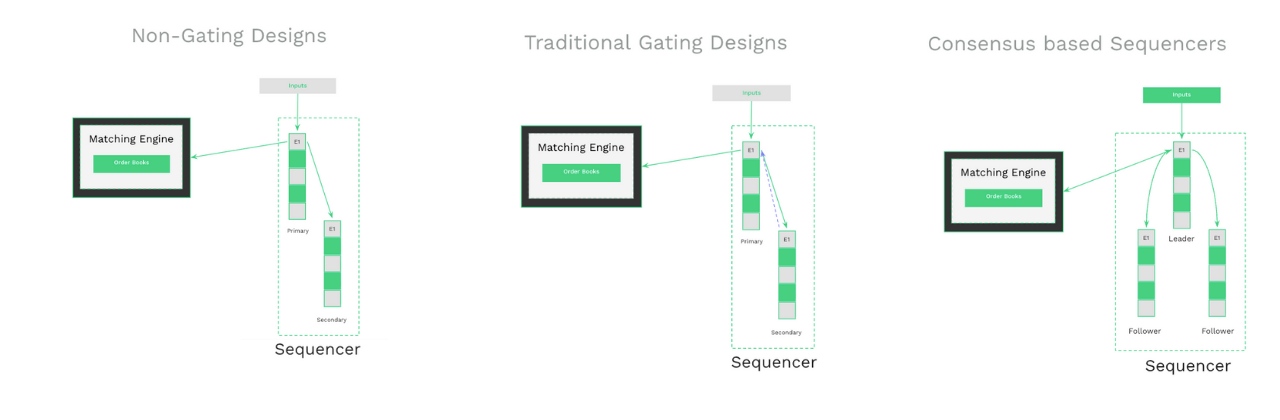

There are several ways to build a sequencer, each making different trade‑offs across latency, availability, and data safety. Broadly, the designs fall into three families: non‑gating, gating, and consensus‑based.

Taken together: non‑gating designs optimize for speed but accept loss; gating reduces loss but adds synchronization and manual operations; consensus‑based sequencing combines ordered durability with automated failover to balance availability and performance in production environments.

With these sequencing options in view, the next question is how to keep state consistent under load and maintain predictable performance. In trading, all components should agree on the same state at the same logical time; consistency is an essential safety property of modern trading systems.

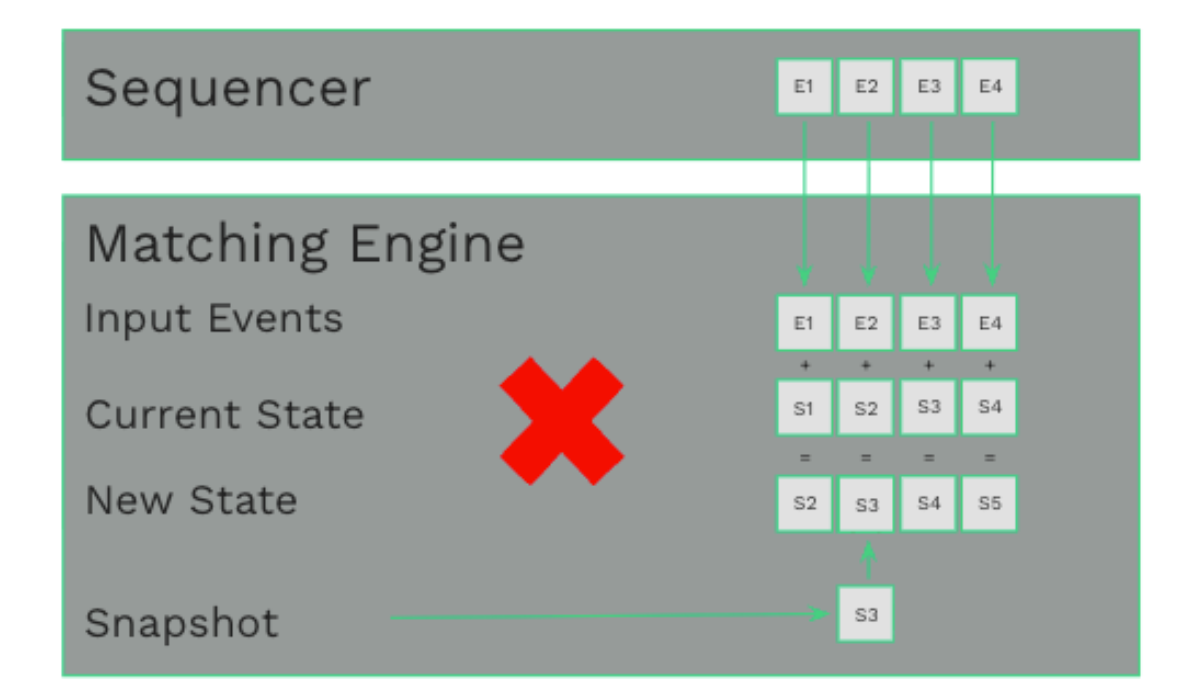

We achieve this by modelling systems as deterministic state machines that apply ordered inputs from the sequencer to transition state and produce output. The internal state of these systems remains consistent across restarts, and replaying the same inputs produces the same side effects.

By leveraging the sequencer to deliver inputs to multiple instances of the same state machine we are able to achieve state machine replication (SMR). Given the same starting state and identical order of inputs, every replica evolves to the same state and emits the same outputs. This allows us to model core domains (orders, accounts, order books) and offer advanced availability models, such as active/active with predictable recovery and auditability.

In practice, applications modelled using state machine replication consume only from the sequencer's ordered log, avoid non‑deterministic inputs (wall‑clock time, randomness, unordered iteration), and start from the same initial state. This combination produces a consistent computation history that is testable, reproducible, and explainable across environments and time.
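As a minimal sketch of these rules, the example below runs two replicas of a toy accounts state machine over the same ordered log. The names `Event`, `AccountsStateMachine`, and `run_replica` are hypothetical and chosen for illustration; the timestamp is assumed to be stamped by the sequencer rather than read from a local clock.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    seq: int           # position assigned by the sequencer (assumed to start at 1)
    timestamp_ns: int  # stamped by the sequencer, never read from a local clock
    account: str
    amount: int        # signed balance change in integer minor units


@dataclass
class AccountsStateMachine:
    balances: dict = field(default_factory=dict)
    last_seq: int = 0

    def apply(self, event: Event) -> str:
        # Refuse gaps or reordering: state may only advance in log order.
        if event.seq != self.last_seq + 1:
            raise ValueError(f"expected seq {self.last_seq + 1}, got {event.seq}")
        new_balance = self.balances.get(event.account, 0) + event.amount
        self.balances[event.account] = new_balance
        self.last_seq = event.seq
        # The output depends only on the event and prior state.
        return f"{event.seq}:{event.account}:{new_balance}@{event.timestamp_ns}"


def run_replica(log):
    """Start from the same empty state and apply the log in order."""
    machine = AccountsStateMachine()
    outputs = [machine.apply(event) for event in log]
    return machine, outputs


log = [
    Event(1, 1_000, "acct-A", 500),
    Event(2, 1_250, "acct-B", 300),
    Event(3, 1_400, "acct-A", -200),
]
replica_1, out_1 = run_replica(log)
replica_2, out_2 = run_replica(log)
```

Both replicas end with the same balances and emit the same outputs, because nothing in `apply` depends on anything other than the event and the prior state.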

With ordering and determinism in place, availability becomes an orchestration problem. Deterministic domains (risk, matching, positions) can run active/active: multiple replicas consume the same ordered inputs and will produce the same outputs; the sequencer handles de‑duplication of inputs so losing one replica does not change externally visible results. At the ingress edge, FIX and WebSocket gateways are inherently non‑deterministic because they accept inputs outside the sequencer. These can be operated as active/warm pairs, with the sequencer managing promotion/demotion on failure and return to service without leaking non‑determinism into core state machines.
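One way to picture the de-duplication step is shown below, as a sketch under stated assumptions: each message a replica submits is keyed by its domain, the sequence number of the input that caused it, and its index among the outputs for that input. The `OutputDeduplicator` class and this key scheme are hypothetical, not a description of any particular sequencer.

```python
class OutputDeduplicator:
    """Keeps the first copy of each replica output and drops later copies."""

    def __init__(self):
        # A production version would bound this set, for example by
        # discarding keys below an acknowledged sequence number.
        self._seen = set()
        self.published = []

    def offer(self, domain, input_seq, output_index, payload):
        key = (domain, input_seq, output_index)
        if key in self._seen:
            return False  # another replica already produced this output
        self._seen.add(key)
        self.published.append(payload)
        return True


dedup = OutputDeduplicator()

# Two replicas of the matching domain process input 7 and emit the same fill.
first = dedup.offer("matching", 7, 0, "fill order-1 qty=10 px=100")
second = dedup.offer("matching", 7, 0, "fill order-1 qty=10 px=100")

# Replica A then fails; replica B alone emits the output for input 8.
third = dedup.offer("matching", 8, 0, "fill order-2 qty=5 px=101")
```

Because deterministic replicas produce identical outputs for the same input, it does not matter which copy arrives first, and observers see one fill per input even after a replica is lost.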

Once domains are modeled as state machines, a global ordered log becomes the backbone that preserves causality across services. Consider a typical flow:

A new order is sequenced; the risk domain validates and reserves funds and emits a downstream “order” event; the matching domain fills and emits an execution report; then risk finalizes accounting and order state. Because each service consumes the same ordered sequence and runs deterministic logic, replicas converge to the same state and publish identical outputs, which the sequencer can de‑duplicate before distribution to observers and gateways. The causality and timing relationships remain explicit and replayable, which simplifies reasoning and recovery.
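The flow above can be sketched as a toy pipeline in which every domain reads the same log and appends its outputs back to it. All class names, event kinds, and fields here are hypothetical, and the matching domain is assumed to fill every accepted order in full at its limit price purely to keep the example short.

```python
class Sequencer:
    """Toy single-process log that assigns a global order to events."""

    def __init__(self):
        self.log = []

    def append(self, kind, **data):
        seq = len(self.log) + 1
        self.log.append({"seq": seq, "kind": kind, **data})
        return seq


class RiskDomain:
    def __init__(self, balances):
        self.available = dict(balances)
        self.reserved = {}
        self.settled = {}

    def on_event(self, ev):
        if ev["kind"] == "NewOrder":
            cost = ev["qty"] * ev["price"]
            if self.available.get(ev["account"], 0) < cost:
                return [("OrderRejected", {"order_id": ev["order_id"]})]
            # Validate and reserve funds, then pass the order downstream.
            self.available[ev["account"]] -= cost
            self.reserved[ev["order_id"]] = cost
            fields = {k: ev[k] for k in ("order_id", "account", "qty", "price")}
            return [("OrderAccepted", fields)]
        if ev["kind"] == "Execution":
            # Finalize accounting: move the reservation to settled.
            cost = self.reserved.pop(ev["order_id"])
            self.settled[ev["account"]] = self.settled.get(ev["account"], 0) + cost
        return []


class MatchingDomain:
    def on_event(self, ev):
        if ev["kind"] == "OrderAccepted":
            # Assumption: immediate full fill at the limit price.
            fields = {k: ev[k] for k in ("order_id", "account", "qty", "price")}
            return [("Execution", fields)]
        return []


def run(sequencer, domains):
    """Deliver each log entry to every domain in order; sequence their outputs."""
    cursor = 0
    while cursor < len(sequencer.log):
        ev = sequencer.log[cursor]
        cursor += 1
        for domain in domains:
            for kind, data in domain.on_event(ev):
                sequencer.append(kind, **data)


sequencer = Sequencer()
risk = RiskDomain({"acct-A": 10_000})
sequencer.append("NewOrder", order_id="order-1", account="acct-A", qty=10, price=100)
run(sequencer, [risk, MatchingDomain()])
kinds = [ev["kind"] for ev in sequencer.log]
```

After the run, `kinds` lists the events in causal order (`NewOrder`, `OrderAccepted`, `Execution`), and replaying the log into a fresh `RiskDomain` would rebuild the same balances.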

Even with fast logs, replaying millions of events during restart is rarely acceptable. The remedy is snapshots of in‑memory state taken at safe points. On failure, this allows you to restore the latest snapshot and replay only subsequent events from that safe point. This shifts recovery from “replay the entire log” to “load snapshot + apply delta since snapshot,” which materially reduces time‑to‑service.
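A minimal sketch of "load snapshot + apply delta" follows. It assumes the snapshot records the sequence number of the last event it includes, so recovery knows where replay must resume; `PositionsStateMachine` and `recover` are hypothetical names.

```python
class PositionsStateMachine:
    def __init__(self):
        self.positions = {}
        self.last_seq = 0

    def apply(self, event):
        seq, symbol, qty = event
        if seq != self.last_seq + 1:
            raise ValueError(f"expected seq {self.last_seq + 1}, got {seq}")
        self.positions[symbol] = self.positions.get(symbol, 0) + qty
        self.last_seq = seq

    def snapshot(self):
        # Taken between events, so it reflects a whole number of applied inputs.
        return {"last_seq": self.last_seq, "positions": dict(self.positions)}

    @classmethod
    def restore(cls, snap):
        machine = cls()
        machine.last_seq = snap["last_seq"]
        machine.positions = dict(snap["positions"])
        return machine


def recover(snap, log):
    """Restore the snapshot, then replay only events after its sequence number."""
    machine = PositionsStateMachine.restore(snap)
    replayed = 0
    for event in log:
        if event[0] > machine.last_seq:
            machine.apply(event)
            replayed += 1
    return machine, replayed


log = [(1, "XYZ", 100), (2, "ABC", 50), (3, "XYZ", -40), (4, "ABC", 25)]

# Normal operation: apply two events, snapshot, then apply the rest.
live = PositionsStateMachine()
live.apply(log[0])
live.apply(log[1])
snap = live.snapshot()
live.apply(log[2])
live.apply(log[3])

# After a crash: rebuild from the snapshot plus the delta.
recovered, replayed = recover(snap, log)
```

Here the recovered machine matches the live one after replaying two events instead of four; with millions of events in the log, the same ratio is what shortens time-to-service.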

For host‑level or zonal failures where the local snapshot is gone, snapshot durability should not rely on a single machine or component. Snapshots can be persisted and replicated to multiple locations, which may include—but do not have to be limited to—the sequencer itself. The key requirement is that, after a leader election or any infrastructure failover, a surviving store can serve the same point‑in‑time snapshot to any recovering replica so all peers restart from an identical state. Decoupling snapshot storage from the ordered log gives operators flexibility in placement, capacity planning, and recovery topology, while ensuring that snapshots themselves are not a single point of failure.
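The sketch below illustrates one possible shape for this, assuming snapshots are serialized deterministically and identified by sequence number plus a SHA-256 digest so that any surviving store can be verified to hold the same bytes. `SnapshotStore`, `replicate`, and `fetch` are hypothetical in-memory stand-ins, not a real storage API.

```python
import hashlib
import json


def encode_snapshot(state, last_seq):
    # Sorted keys and fixed separators give every replica identical bytes.
    body = json.dumps(
        {"last_seq": last_seq, "state": state},
        sort_keys=True,
        separators=(",", ":"),
    ).encode("utf-8")
    return hashlib.sha256(body).hexdigest(), body


class SnapshotStore:
    """In-memory stand-in for one snapshot location (host, zone, or service)."""

    def __init__(self, name):
        self.name = name
        self.available = True
        self._blobs = {}

    def put(self, seq, digest, body):
        self._blobs[seq] = (digest, body)

    def get(self, seq):
        if not self.available:
            raise ConnectionError(f"{self.name} is unreachable")
        return self._blobs[seq]


def replicate(stores, seq, digest, body):
    for store in stores:
        store.put(seq, digest, body)


def fetch(stores, seq, expected_digest):
    """Return the snapshot from the first reachable store whose bytes verify."""
    for store in stores:
        try:
            digest, body = store.get(seq)
        except (ConnectionError, KeyError):
            continue
        if digest == expected_digest and hashlib.sha256(body).hexdigest() == expected_digest:
            return json.loads(body)
    raise LookupError(f"no store could serve a valid snapshot at seq {seq}")


digest, body = encode_snapshot({"acct-A": 300}, 42)
stores = [SnapshotStore("local"), SnapshotStore("zone-b"), SnapshotStore("zone-c")]
replicate(stores, 42, digest, body)

stores[0].available = False  # simulate losing the host that took the snapshot
restored = fetch(stores, 42, digest)
```

Checking the digest on read is a design choice in this sketch: it lets every recovering replica confirm it is restarting from the same point-in-time state, regardless of which store served it.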

Sequencer consensus will incur additional network costs, but those can be effectively managed with various advanced techniques to meet trading targets.

Together, these techniques support tens‑of‑microseconds targets at institutional message rates while keeping p99 and beyond under control.

Let’s summarize what we have learned about high-performance sequencer architectures. A production‑grade platform for capital markets should exhibit a number of non-negotiable characteristics and quality attributes:

Taken together, these properties align consistency, availability, and performance (rather than forcing a trade-off decision between them), making sequencer architectures the solid, scalable choice for future capital markets architectures.
